I have reimplemented Sanic's HTTP handling in pure Python and gained about 20% in performance (53000 req/s against 44000 req/s without these changes), with several hundred fewer lines of code than the old implementation. This is a large patch with implications for all Sanic users, so I would like to invite public discussion on what the new API is going to look like and on how much havoc the changes create in existing applications.

The pull request is here: https://github.com/huge-success/sanic/pull/1791

Please do test it, particularly if you are developing extensions or larger applications based on Sanic. Let me know what problems there are, and we’ll see how they are best fixed.

The main motivation for this was to make streaming a first-class feature: all requests and responses are now streamed, and there is no separate implementation for non-streaming mode. Simple responses returned from handlers still work as before because Sanic automatically handles the streaming outside of the handler call.

The new streaming API does not require callbacks. It also fixes a long-standing problem with the maximum request size, because streaming handlers now have the opportunity to adjust the limit before receiving the request body:

    @app.post("/echo", stream=True)
    async def echo(request):
        # Lift the size limit
        request.stream.request_max_size = float("inf")
        # Spawn a streaming response
        response = request.respond(content_type=request.content_type)
        # Stream request body back to sender
        async for data in request.stream:
            await response.send(data)
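
To illustrate why it matters that the handler runs before the body is read, here is a toy model in plain asyncio. It is not Sanic's code: `ToyStream`, `PayloadTooLarge`, `read_body` and `demo` are hypothetical names made up for this sketch, and the only assumption carried over from the handler above is that the stream checks `request_max_size` as chunks arrive, so a value set by the handler beforehand is the one that applies.

```python
import asyncio


class PayloadTooLarge(Exception):
    """Raised when the received body exceeds the configured limit."""


class ToyStream:
    """Hypothetical stand-in for a request stream; not Sanic's class."""

    def __init__(self, chunks, request_max_size):
        self._chunks = list(chunks)
        self.request_max_size = request_max_size
        self._received = 0

    def __aiter__(self):
        return self

    async def __anext__(self):
        if not self._chunks:
            raise StopAsyncIteration
        await asyncio.sleep(0)  # pretend to wait for the network
        chunk = self._chunks.pop(0)
        self._received += len(chunk)
        # The limit is checked as each chunk arrives, so whatever value
        # the handler has set by now is the one that applies.
        if self._received > self.request_max_size:
            raise PayloadTooLarge(f"{self._received} > {self.request_max_size}")
        return chunk


async def read_body(stream, lift_limit):
    """Toy handler: optionally lift the limit, then read the whole body."""
    if lift_limit:
        stream.request_max_size = float("inf")
    body = b""
    async for chunk in stream:
        body += chunk
    return body


def demo():
    """Read a 12-byte body against a 10-byte limit, with and without lifting it."""
    chunks = [b"x" * 6, b"y" * 6]
    lifted = asyncio.run(read_body(ToyStream(chunks, 10), lift_limit=True))
    try:
        asyncio.run(read_body(ToyStream(chunks, 10), lift_limit=False))
        rejected = False
    except PayloadTooLarge:
        rejected = True
    return len(lifted), rejected
```

In this sketch the same body is accepted when the limit is lifted first and rejected when it is not, which is the situation a non-streaming handler could never influence because the body had already been received by the time it ran.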

Callback-based StreamingHTTPResponse is still supported, but deprecated in favour of the new API.

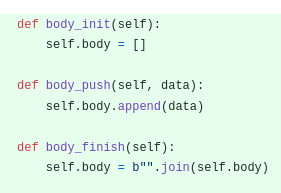

Reading and writing data is implemented with async send/receive functions which avoid some buffer queues and allow for automatic backpressure control. Another interesting effect of this is that only the server code depends on asyncio facilities in any significant manner. Because of this it becomes much easier to implement alternative backends such as a Trio server.
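
The backpressure idea can be sketched with nothing but asyncio. This is not how the pull request implements it: a bounded `asyncio.Queue` stands in for the connection, and `producer`, `consumer`, `max_lead` and `main` are names invented for the illustration. The point is only that when sending is an awaitable operation, a slow receiver automatically slows the sender down instead of letting data pile up in a buffer.

```python
import asyncio

CHUNKS = [b"a", b"b", b"c", b"d", b"e"]


async def producer(channel, chunks, log):
    for chunk in chunks:
        # put() suspends while the channel is full, so the producer
        # cannot run arbitrarily far ahead of the consumer.
        await channel.put(chunk)
        log.append(("sent", chunk))
    await channel.put(None)  # end-of-stream marker


async def consumer(channel, log):
    while True:
        chunk = await channel.get()
        if chunk is None:
            break
        await asyncio.sleep(0.01)  # simulate a slow client
        log.append(("received", chunk))


def max_lead(log):
    """Largest number of chunks sent but not yet fully processed."""
    lead = worst = 0
    for kind, _ in log:
        lead += 1 if kind == "sent" else -1
        worst = max(worst, lead)
    return worst


async def main():
    channel = asyncio.Queue(maxsize=1)  # at most one chunk in flight
    log = []
    await asyncio.gather(
        producer(channel, CHUNKS, log),
        consumer(channel, log),
    )
    return log
```

Running `asyncio.run(main())` returns the interleaved event log; `max_lead` on that log stays small no matter how many chunks are sent, because the producer is suspended whenever the consumer falls behind.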

The pull request has a more comprehensive listing of things removed, possibly broken or otherwise affected, so please have a look if you believe this might concern you. Most importantly, again, please do test this with your stuff.

If all goes well, I expect this to land in Sanic 20.6, released in June.